You approved the budget for a generative search feature, your engineering team delivered a technically flawless product, and your telemetry now shows a usage graph that is completely flat. The capital expenditure has successfully converted into an ongoing operational liability. Across the enterprise software sector, organizations are discovering that integrating advanced large language models into legacy platforms is creating an unsustainable misalignment between product value and compute cost. When you build features that require users to engineer prompts just to retrieve their own data, you are not delivering an operational advantage. You are actively degrading their workflow while paying premium API rates for the privilege.

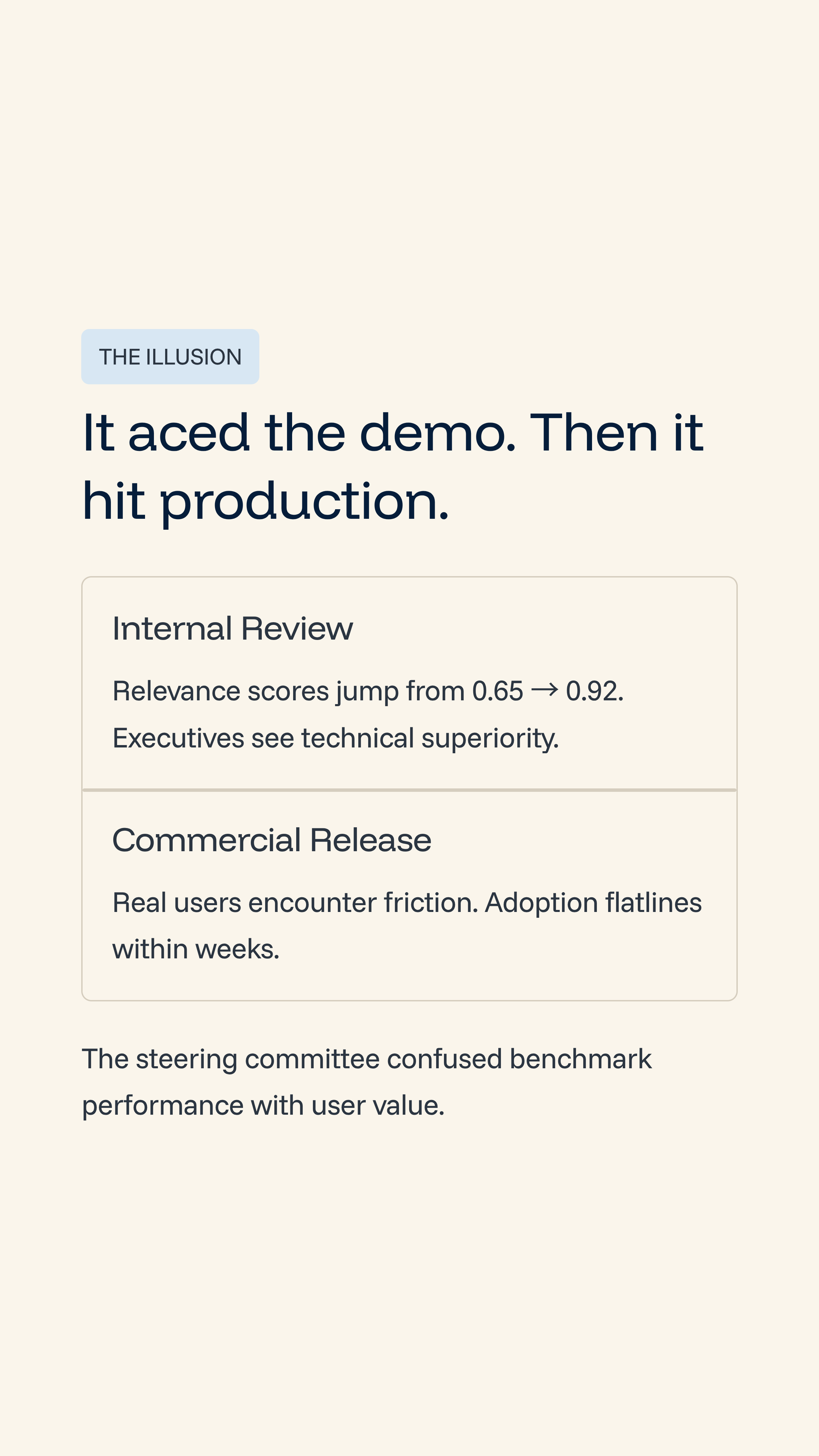

The Illusion of Technical Readiness

The current operational mandate for most product teams is to unlock the value of proprietary data using modern generative capabilities. The internal logic dictates that since customers have spent years depositing documents and logs into your platform, allowing them to query that data with natural language will automatically drive retention. Engineering teams spin up modern retrieval-augmented generation pipelines and integrate frontier models like Claude to process the requests.

During internal executive review sessions, these implementations perform flawlessly. A complex question is entered into a text box, the system processes the proprietary context, and a highly accurate summary is generated. Product managers present relevance scores that jump from a barely functional 0.65 under legacy keyword search to a near-perfect 0.92. From the perspective of the steering committee, the organization appears fully prepared to capture market share through technical superiority.

The Cognitive Friction of the AI Interface

This perception of success fractures immediately upon commercial release. The failure occurs because the development team fell in love with the technical mechanism of artificial intelligence rather than the actual operational outcome for the user. The organization fundamentally misunderstood the nature of the interface.

To utilize the new capability, a customer must interrupt their primary workflow. They are forced to navigate to an independent sidebar, formulate a precise text prompt, wait for the generation cycle, and then manually migrate the output back to their original workspace. The cost of this friction appears almost instantly. Adoption stalls because the cognitive load required to operate the tool vastly exceeds the perceived value of the retrieved information.

A Predictable Financial Drain

Consider a recently deployed, highly accurate generative interface designed to solve complex document retrieval for a SaaS platform. The initial rollout was internally celebrated by the product leaders.

By month two, telemetry revealed a harsh reality regarding the deployment:

The few remaining active users were highly technical individuals who enjoyed the novelty of the chat interface.

These power users were running highly complex, context-heavy queries multiple times per hour.

The company was paying variable, token-based compute costs of roughly $0.08 per query to the model provider.

The business was charging these users a standard, flat-rate software subscription of $29 per month.

If a power user queried the system just 15 times a day, the math stopped working: at $0.08 per query over a 30-day month, that is roughly $36 in compute against $29 in subscription revenue. The business was no longer making money on that user; it was bleeding cash. The initiative created a localized financial drain while failing entirely to speed up the core workflow. The team had built a Ferrari to deliver pizzas, but was only charging the customer for the pizza.
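The break-even point in this example can be checked with a few lines of arithmetic. The sketch below is illustrative only: it uses the $0.08 per-query cost and $29 subscription price from the example, assumes a 30-day billing month, and ignores every other cost (hosting, support, payment fees), so real margins would be thinner. The function names are hypothetical.

```python
# Figures taken from the example above.
COST_PER_QUERY = 0.08       # USD paid to the model provider per query
SUBSCRIPTION_PRICE = 29.0   # USD charged per user per month

# Assumption for illustration: a 30-day billing month.
DAYS_PER_MONTH = 30


def monthly_compute_cost(queries_per_day: float) -> float:
    """Variable compute cost for one user over one month."""
    return queries_per_day * DAYS_PER_MONTH * COST_PER_QUERY


def monthly_margin(queries_per_day: float) -> float:
    """Subscription revenue minus compute cost for one user (negative = loss)."""
    return SUBSCRIPTION_PRICE - monthly_compute_cost(queries_per_day)


def break_even_queries_per_day() -> float:
    """Daily query volume at which compute cost equals the subscription price."""
    return SUBSCRIPTION_PRICE / (COST_PER_QUERY * DAYS_PER_MONTH)


if __name__ == "__main__":
    for q in (5, 12, 15, 30):
        print(f"{q:>3} queries/day -> margin ${monthly_margin(q):.2f}/month")
    print(f"break-even: {break_even_queries_per_day():.2f} queries/day")
```

Under these assumptions, break-even sits at about 12 queries per day, so the 15-queries-a-day user costs about $7 a month more than they pay.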

The Wrapper Fallacy

The fundamental driver of this failure is the wrapper fallacy. Users do not actually want to search for data. They want their tasks to be completed.

By forcing a customer to prompt an interface, the product has merely shifted the labor from manual searching to prompt engineering. The tool does not eliminate work. It replaces one form of manual effort with a new, unfamiliar form of manual effort. The interface acts as a destination when it should function as an invisible utility that operates silently in the background to automate the decision.

The Compounding Cost of Variable Compute

When this conceptual error scales across a user base, it creates severe structural risks for the business. The primary risk is uncapped financial liability. Software companies are accustomed to predictable gross margins built on fixed hosting costs. Generative tools introduce variable compute costs driven entirely by token consumption.

This mismatch between flat-rate pricing and variable cost creates uncapped exposure in your cost of goods sold through several compounding vectors:

Token Consumption Escalation: Complex queries require significant vector compute and LLM tokens, driving up the cost of goods sold on every interaction.

Power User Subsidization: Bundling unlimited AI access into a standard flat-rate subscription means your most engaged customers become active liabilities that bankrupt the product.

Context Window Bloat: As users upload more documents, the context required to answer their prompts grows, inherently increasing the cost of every single API call over time.

The business is effectively subsidizing the operations of its most active users. This dynamic inverts traditional software economics.
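To see why context growth matters, it helps to model a single call. The sketch below is a simplified assumption, not any provider's billing formula: it treats cost as a linear function of input and output tokens, and the per-million-token rates are hypothetical placeholders. The class and function names are likewise illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    # Hypothetical placeholder rates in USD per million tokens.
    # These are NOT any provider's actual prices.
    input_per_million: float = 3.0
    output_per_million: float = 15.0


def call_cost(
    context_tokens: int,
    prompt_tokens: int,
    output_tokens: int,
    pricing: TokenPricing = TokenPricing(),
) -> float:
    """Estimated USD cost of one call under a linear per-token pricing model.

    context_tokens is the retrieved document context sent with the prompt;
    it counts as input alongside the user's own prompt tokens.
    """
    input_tokens = context_tokens + prompt_tokens
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


if __name__ == "__main__":
    # Same question, same answer length, growing retrieved context.
    for context in (5_000, 10_000, 20_000, 40_000):
        cost = call_cost(context, prompt_tokens=200, output_tokens=500)
        print(f"{context:>6} context tokens -> ${cost:.4f} per call")
```

In this model the user's question and the answer length stay constant, yet the cost per call rises with the amount of context attached, which is the bloat effect described above.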

Margin Compression and Strategic Vulnerability

This margin compression cascades into severe strategic vulnerabilities for the entire organization. Capital is actively tied up supporting features that function as novelties rather than critical infrastructure. These implementations are frequently categorized as copilots. They demo exceptionally well during procurement cycles, but they act as vitamins rather than painkillers.

For software-as-a-service companies, this directly impacts critical valuation metrics in three distinct ways:

Gross Margin Degradation: When the cost of goods sold increases unpredictably due to variable compute costs, gross margins dip. This makes it far harder to hit the gross margin benchmarks the board expects.

Resource Misallocation: Engineering hours are spent maintaining a low-adoption AI wrapper instead of shipping core features that actually drive net-new revenue or expansion.

False Product-Market Fit: When these generative search wrappers go offline for maintenance, the silence from the user base is deafening. The customers simply revert to their previous, manual workflows, proving the feature is highly expendable.

Spending operating capital to build and support a feature that users will not miss when it breaks is a misallocation of resources.
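The gross margin effect can be made concrete with a blended calculation across user segments. This is a rough sketch under stated assumptions: gross margin is taken as (revenue - COGS) / revenue, COGS is limited to variable compute plus an optional fixed per-user cost, and the segment sizes and query volumes in the usage example are invented purely for illustration. Only the $29 price and $0.08 per-query cost come from the example earlier in the article.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Segment:
    name: str
    users: int
    queries_per_user_per_month: float


def blended_gross_margin(
    segments: Iterable[Segment],
    price_per_user: float,
    cost_per_query: float,
    fixed_cogs_per_user: float = 0.0,
) -> float:
    """Gross margin as a fraction of revenue across all segments."""
    segments = list(segments)
    users = sum(s.users for s in segments)
    revenue = users * price_per_user
    if revenue <= 0:
        raise ValueError("revenue must be positive to compute a margin")
    total_queries = sum(s.users * s.queries_per_user_per_month for s in segments)
    cogs = total_queries * cost_per_query + users * fixed_cogs_per_user
    return (revenue - cogs) / revenue


if __name__ == "__main__":
    # Hypothetical user mixes, for illustration only.
    all_casual = [Segment("casual", 1000, 10)]
    with_power_users = [Segment("casual", 900, 10), Segment("power", 100, 450)]
    for label, mix in (("all casual", all_casual), ("10% power users", with_power_users)):
        margin = blended_gross_margin(mix, price_per_user=29.0, cost_per_query=0.08)
        print(f"{label}: {margin:.1%} gross margin")
```

The point of the comparison is directional rather than precise: a small share of heavy users can move the blended margin for the whole product.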

Implementing the Product Profitability Test

For the Chief Product Officer and the Chief Financial Officer, regaining control of these margins requires enforcing strict business viability gates before any engineering work begins. The organization must stop evaluating initiatives based purely on technical possibility. Leadership must implement a strict product profitability test to govern all future developments.

Before writing a single line of code, every AI feature must pass three non-negotiable hurdles:

The Value Test: The feature must prove it completely automates a workflow rather than simply shifting the labor to the user. If the AI writes a draft that the user must spend ten minutes editing, the labor is shifted, not reduced.

The Margin Test: The commercial model must protect the unit economics. Businesses must move away from flat-rate subscriptions for variable compute and implement strict fair-use caps or usage-based pricing models (credits) to cap their downside exposure.

The Retention Test: The product must prove it is a painkiller. If the feature can be removed without causing customer churn, because users simply go back to doing the work the old way, the development budget should not be approved.
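One way to operationalize the Margin Test is a per-account credit allowance that bounds compute spend. The sketch below is a minimal illustration, not a billing system: `CreditAccount`, its fields, and the one-credit-per-query mapping are all hypothetical, and concerns such as monthly resets, overage billing, and concurrency are deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class CreditAccount:
    """Hypothetical per-user credit ledger for one billing period."""

    monthly_credits: int
    used: int = 0

    def remaining(self) -> int:
        return self.monthly_credits - self.used

    def try_spend(self, credits: int) -> bool:
        """Deduct credits if the allowance covers them; otherwise refuse."""
        if credits <= 0:
            raise ValueError("credits must be a positive integer")
        if credits > self.remaining():
            return False  # cap reached: caller can block, queue, or upsell
        self.used += credits
        return True


def max_compute_exposure(monthly_credits: int, cost_per_credit: float) -> float:
    """Worst-case compute cost per user per period under the cap."""
    return monthly_credits * cost_per_credit


if __name__ == "__main__":
    # Assumption: one credit per query at the article's $0.08 per-query cost.
    account = CreditAccount(monthly_credits=200)
    print(account.try_spend(150))   # allowance covers this request
    print(account.try_spend(100))   # would exceed the allowance
    print(account.remaining())
    print(max_compute_exposure(200, 0.08))
```

With a cap in place, worst-case compute cost per user becomes a known number that can be compared directly with the subscription price before launch.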

The Executive Governance Imperative

The pressure to ship generative capabilities is creating highly unpredictable margin exposure across the sector. Treating artificial intelligence integration as a simple user interface update is a failure of executive governance. Organizations that fail to control the unit economics of their generative features face severe planning risks.

When a company misses its quarterly forward guidance because an isolated product team bundled unlimited token generation into a flat-rate subscription, the root issue is not the cost of the language model. The issue is a fundamental lack of financial discipline during product development. Building for strict margin control and true automation is the only sustainable path forward. It is always more cost-effective to kill a flawed product concept on a whiteboard than it is to shut down a cash-bleeding feature after it has already reached the market.